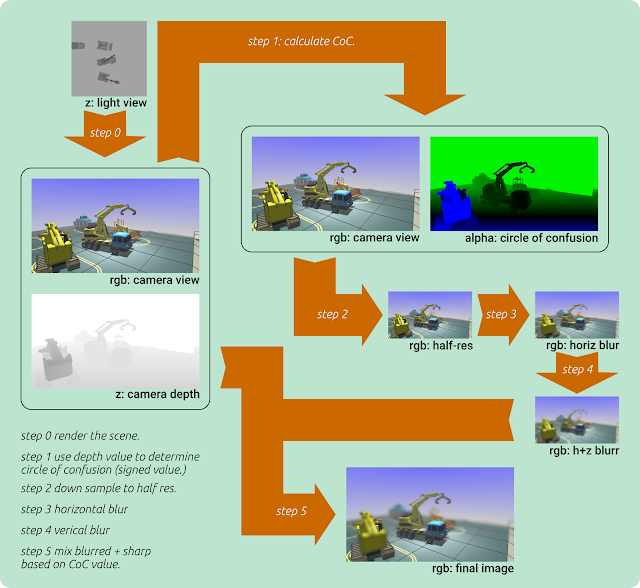

A year or so ago, I added shallow depth-of-field rendering to my render engine for Little Crane 2. Because the details were getting vague, I had to refresh my memory, so I charted the flow of my render steps. I call them steps, not passes, because most of them involve rendering just four vertices for a full-screen quad. The actual passes are a shadow-map generation pass over the models (not shown), followed by the standard render pass (step 0) that renders into an off-screen buffer.

Steps 1, 2, 3 and 4 each render into their own off-screen buffer, and the final step, step 5, renders to the screen/window.
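As a rough sketch of this chain, the snippet below records which buffers each step reads and what size of target it writes. It makes several assumptions: the window is `width` x `height`, step 1 stays at full resolution (it is a copy), steps 3 and 4 run at the half resolution produced by step 2, and step 5 reads the step 1 output (sharp color plus CoC) and the step 4 output (blurred). `STEP_INPUTS` and `buffer_sizes` are hypothetical names, not engine code.

```python
# Hypothetical description of the render-step chain (not the engine's code).
STEP_INPUTS = {
    0: [],      # scene render into an off-screen buffer (after the shadow-map pass)
    1: [0],     # copy color, store CoC in alpha
    2: [1],     # downsample to half resolution
    3: [2],     # horizontal 7-tap blur
    4: [3],     # vertical 7-tap blur
    5: [1, 4],  # assumed: mix sharp (step 1) and blurred (step 4) onto the screen
}


def buffer_sizes(width, height):
    """Return the (width, height) of the target each step renders into."""
    full = (width, height)
    half = (width // 2, height // 2)
    return {0: full, 1: full, 2: half, 3: half, 4: half, 5: full}
```

For a 1920x1080 window, steps 2 to 4 would then work on 960x540 buffers, a quarter of the pixels.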

In step 1, the input image is copied, and a Circle-of-Confusion (CoC) size is stored in the alpha channel. To calculate this size, the depth of the pixel is compared to the focal distance. The CoC size is a signed value that controls the blurriness. It is 0.0 when the pixel is perfectly in focus, and -1.0 when the pixel is in the foreground and requires maximum blur. At +1.0 the pixel is also maximally blurred, but this time because it is in the far background. Intermediate values correspond to moderately blurred pixels.
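The exact depth-to-CoC mapping isn't given here. A minimal sketch, assuming linear view-space depth and a linear ramp that saturates at a hypothetical `focus_range` distance from the focal plane, reproduces the sign convention above: negative in front of the focal plane, positive behind it. A physical thin-lens CoC is not linear in depth, so treat the ramp as a simple stand-in.

```python
def circle_of_confusion(depth, focal_distance, focus_range):
    """Signed CoC in [-1, 1]: negative in front of the focal plane,
    positive behind it, 0.0 exactly at the focal distance.

    Assumption: a linear ramp that saturates focus_range (a hypothetical
    parameter) away from the focal plane.
    """
    coc = (depth - focal_distance) / focus_range
    return max(-1.0, min(1.0, coc))


def coc_pass(color, depth, focal_distance, focus_range):
    """Step 1 sketch: copy RGB and store the CoC in the alpha channel.

    color is a 2D list of (r, g, b) tuples; depth is a 2D list of
    linear view-space depths with the same shape.
    """
    return [
        [(r, g, b, circle_of_confusion(d, focal_distance, focus_range))
         for (r, g, b), d in zip(color_row, depth_row)]
        for color_row, depth_row in zip(color, depth)
    ]
```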

In step 2, we simply downsample the input to half resolution. This increases the effective blur size and saves render cycles. Not shown in the chart is that the downsampled image also carries the alpha channel with the CoC values.
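The downsampling filter isn't specified. A 2x2 box filter is one simple choice; this sketch averages all four RGBA channels, so the CoC in alpha is downsampled together with the color. It assumes even image dimensions (a trailing odd row or column is dropped), and `downsample_half` is a hypothetical name.

```python
def downsample_half(img):
    """2x2 box-filter downsample of a 2D list of (r, g, b, coc) tuples."""
    height, width = len(img), len(img[0])
    out = []
    for y in range(0, height - 1, 2):
        row = []
        for x in range(0, width - 1, 2):
            quad = (img[y][x], img[y][x + 1], img[y + 1][x], img[y + 1][x + 1])
            # Average every channel, including the CoC stored in alpha.
            row.append(tuple(sum(p[k] for p in quad) / 4.0 for k in range(4)))
        out.append(row)
    return out
```

One caveat with plain averaging: across a sharp depth edge the signed CoC can cancel out (two foreground pixels at -1.0 and two background pixels at +1.0 average to 0.0, i.e. "in focus"). Keeping the CoC of the nearest sample is one alternative; which behaves better here is a judgment call.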

In steps 3 and 4, we blur the input with 7 taps: first in the horizontal direction, then in the vertical direction. This smears each pixel out over a 7x7 (49-pixel) area with only 14 samples. The blur algorithm does require some thought, though: the weights of the samples need to be adapted to the situation. Foreground pixels can be blurred over pixels that are in focus, but background pixels cannot, because the blurred background is occluded by the in-focus objects.
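The weights themselves aren't spelled out, so the following is only one possible scheme, stated as an assumption. A foreground sample (negative CoC) is weighted by its blur amount |CoC| and may bleed onto any pixel. A background sample (positive CoC) is capped by the center pixel's own background CoC, so it contributes nothing to an in-focus or foreground pixel. The center tap always counts with weight 1, and image edges are clamped. `sample_weight`, `blur_row` and `blur_image` are hypothetical names.

```python
def sample_weight(center_coc, sample_coc):
    """Weight of one tap, given the CoC of the center pixel and of the tap."""
    if sample_coc < 0.0:
        # Foreground: its blur may spread over anything behind it.
        return -sample_coc
    # Background: only spreads onto pixels that are background themselves,
    # so in-focus (0.0) and foreground (< 0.0) centers receive nothing.
    return min(sample_coc, max(center_coc, 0.0))


def blur_row(row, radius=3):
    """One (2 * radius + 1)-tap pass over a list of (r, g, b, coc) pixels."""
    n = len(row)
    out = []
    for i, (r, g, b, coc) in enumerate(row):
        acc = [r, g, b]  # center tap, always weight 1
        total = 1.0
        for offset in range(-radius, radius + 1):
            if offset == 0:
                continue
            j = min(max(i + offset, 0), n - 1)  # clamp at the edges
            sr, sg, sb, scoc = row[j]
            w = sample_weight(coc, scoc)
            acc[0] += w * sr
            acc[1] += w * sg
            acc[2] += w * sb
            total += w
        # The center CoC is passed through unchanged for the final mix.
        out.append((acc[0] / total, acc[1] / total, acc[2] / total, coc))
    return out


def blur_image(img):
    """Horizontal pass over rows (step 3), then vertical over columns (step 4)."""
    rows = [blur_row(row) for row in img]
    cols = [blur_row(list(col)) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]
```

Splitting the blur into two passes is what brings the cost down to 7 + 7 = 14 samples per pixel. Because the weights depend on the CoC values, the two passes only approximate a full 7x7 kernel, which is usually an acceptable trade for the savings.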

In step 5, we simply mix the blurred and sharp versions of the image based on the circle-of-confusion value. If the pixel is in focus, no blurred pixels get mixed in. If the pixel is in the far background or the far foreground, only the blurred pixel makes it to the screen, and the sharp pixel is mixed out entirely.
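A minimal sketch of this mix, assuming a linear blend weighted by |CoC| (only the endpoints are stated above) and assuming the half-resolution blurred buffer has already been sampled at the full-resolution pixel, for instance with bilinear filtering. In a GLSL shader this would typically be a single `mix()` call; `composite` is a hypothetical name.

```python
def composite(sharp, blurred, coc):
    """Blend sharp and blurred RGB by |CoC|: 0.0 keeps the sharp pixel,
    -1.0 or +1.0 keeps only the blurred pixel."""
    t = min(abs(coc), 1.0)
    return tuple(s + (b - s) * t for s, b in zip(sharp, blurred))
```

Using |CoC| makes the foreground and background behave symmetrically in this step; the asymmetry between them is handled earlier, by the blur weights.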